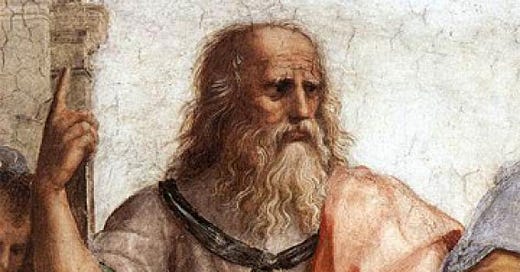

Plato said “The measure of a man is what he does with power.” I think he’s being a little imprecise. Imagine a man who would be the next Hitler but for his lack of power. I don’t think we want to call him a good man just because he never had the opportunity to be evil.

So I’m going to revise Plato a bit here and say “The measure of a man is what he would have done if he had had power.” I also want to be precise about what we mean by “had power”; I will take it to mean X has power if X is omnipotent, i.e., X can make anything happen if it is metaphysically possible.

And now that we’ve gotten Plato’s statement into the form of a nice counterfactual, we can attack it with all the machinery for dealing with counterfactuals I’ve developed over my last six posts on them. We can play around with this formulation and see what conclusions we can draw.

Let’s consider a person P, to be gender neutral. I’ll start by defining a set of sentences CP, for counterfactuals, given by CP = {“If P had had power, he or she would have done X” : X is an action that makes the counterfactual true}. Then we can define a function g: CP → [0, 1] such that g(“If P had had power, he or she would have done X”) = 0 if X is not at all bad and 1 if X is maximally evil (think eternal continuous torture); g will be between 0 and 1 otherwise. I will notate this as g(X), for convenience.

Then we can ask about the value of sup(g(CP)). We can define another function E(P) (for Evil) that maps P onto sup(g(CP)). We can imagine E as telling us how bad the most evil thing P would do with power is. We can imagine someone who, say, would just spy on his crush naked (call him T, for Peeping Tom) such that E(T) = 0.25, for example. We can imagine another person who would be the most malevolent god imaginable with omnipotence, call him M for Malevolent, such that E(M) = 1.
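To make the definitions concrete, here is a minimal Python sketch of E on a finite toy set of actions. The action names, the scores, and the helper `evil_measure` are all illustrative assumptions, not part of the model itself; on a finite set the supremum is simply the maximum.

```python
def evil_measure(scores):
    """Return E(P): the supremum of g over the actions P would take with power.

    `scores` maps each action X to g(X) in [0, 1]. For a finite set the
    supremum is the maximum; an empty set scores 0 (an assumed convention).
    """
    for action, value in scores.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"g({action!r}) = {value} is outside [0, 1]")
    return max(scores.values(), default=0.0)


# Hypothetical scores, chosen only to match the examples in the text.
peeping_tom = {"spy on crush": 0.25, "skip every queue": 0.05}
malevolent = {"eternal continuous torture": 1.0, "spy on crush": 0.25}

print(evil_measure(peeping_tom))  # 0.25
print(evil_measure(malevolent))   # 1.0
```

Only the worst action matters to E here, which is exactly the coarseness of the model.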

Notice that if we’re interested in talking about how good, rather than how evil, someone is with power we can define a symmetrical system that does that in the obvious way (exercise for the reader). As someone who’s inherently biased against people with power, I think modeling the situation in terms of evil is probably more realistic.

This formulation isn’t very fine-grained, unfortunately. It doesn’t differentiate between a person who would do one half-evil thing and someone who would do, say, six half-evil things given power. For this reason and others, utilitarians may not like this model. It also doesn’t respect addition in the way utilitarian calculus should; someone who does one fully evil thing is, on this conception, worse than someone who does three half-evil things. It’s not clear to me that we can avoid this by summing the g(X), as this runs into problems I’ve discussed before where we can’t differentiate between two divergent series in the utilitarian calculus, which creates equivalence classes we certainly don’t want. Of course, we can make the sums finite by fiat by only discussing what people actually do with power (for a less restrictive reading of the word “power” than omnipotence) rather than what they would do, but again, it seems to me this somehow misses the point Plato was making.
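The contrast between the supremum and a utilitarian-style sum can be sketched on finite lists of scores. The functions `sup_measure` and `sum_measure` and the numbers are assumptions made for illustration; the sum is only well behaved here because the lists are finite.

```python
def sup_measure(scores):
    """The model's measure: the worst single act (max of a finite list)."""
    return max(scores, default=0.0)


def sum_measure(scores):
    """A utilitarian-style alternative: add up every act's score."""
    return sum(scores)


one_half = [0.5]
six_halves = [0.5] * 6
three_halves = [0.5] * 3
one_full = [1.0]

# The supremum cannot tell one half-evil act from six of them.
print(sup_measure(one_half), sup_measure(six_halves))  # 0.5 0.5
print(sum_measure(one_half), sum_measure(six_halves))  # 0.5 3.0

# The two measures rank "one fully evil act" vs "three half-evil acts" oppositely.
print(sup_measure(one_full) > sup_measure(three_halves))  # True
print(sum_measure(one_full) > sum_measure(three_halves))  # False
```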

But by the same token, I do admit there’s a strong moral intuition for considering actual events over hypothetical ones. We might ascribe that intuition to evidentiary concerns, since in usual circumstances it’s hard to be sure of counterfactuals. I deny that this moral intuition remains in cases where you have total, 100% certainty of hypothetical outcomes that would have happened under plausible conditions. This is, after all, the whole basis of To Catch a Predator, and who can question that show’s wisdom?

And as I’ve mentioned before, if a utilitarian is committed to the metaphysical existence of possible worlds, they have no basis to complain. Possible worlds in general create a lot of issues for utilitarians; I think they ought to deny them.

One mathematical point of interest is that since we’re working with the supremum of the set g(CP), someone who would commit every imaginable evil act that is strictly less than totally evil (i.e. every X with g(X) < 1) will be such that sup(g(CP)) = 1, which is to say that we’re considering such a person to be just as evil as someone who does the most evil thing. Do we think that’s fair?

I think it probably is. What we’re saying is really just the moral equivalent of .999…=1, which is trivially true.
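A small sketch of why the supremum comes out as 1 in this case, using exact fractions. The particular sequence 9/10, 99/100, ... and the helper `g_values` are assumed stand-ins for the scores of such a person’s acts: every finite batch has a maximum below 1, but the gap to 1 can be made as small as we like.

```python
from fractions import Fraction


def g_values(n):
    """First n scores 9/10, 99/100, ...; each is strictly below 1."""
    return [1 - Fraction(1, 10**k) for k in range(1, n + 1)]


values = g_values(12)

# No individual act is maximally evil...
print(all(v < 1 for v in values))  # True

# ...but the worst act so far sits within 10**-12 of 1, and taking more
# terms shrinks the gap further, so the least upper bound of the full set is 1.
gap = 1 - max(values)
print(gap)  # 1/1000000000000
```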

Another thing we have to address is which semantics of counterfactuals we are using in this model.

Ordinarily, as I’ve mentioned, I like the similarity analysis[1]. I like to require only that a large majority of the most similar antecedent worlds be consequent worlds, since that licenses many of the ordinary counterfactuals we want to be true. The tradeoff is that we give up some certainty in the hypothetical outcome, which isn’t ideal in this case, but I think the alternative is worse.

We could make a case for the strict conditional analysis[2], but it seems to me the bullets we have to bite to get total certainty just aren’t worth it. On the strict conditional analysis, someone could be Hitler in 999 possible worlds out of 1000 and only slightly less bad than Hitler in the remaining world and this model might be unable to condemn them at all!

As an aside, recall that the strict conditional analysis also has problems with monotonicity: it lets us strengthen the antecedent of any true counterfactual, which ordinary speech does not.

In ordinary language, we accept that both of the following sentences can be true at once:

“If it had rained, I wouldn’t have gone to the movies. But if it had rained and a billionaire had offered me a million dollars to go to the movies, I would have gone to the movies.”

We want to accept that discourse like this is legitimate. The strict conditional analysis fails to license this; if it’s true that “if it had rained, I wouldn’t have gone to the movies,” then in absolutely every accessible world where it rained, I didn’t go to the movies, including the one where it rains and a billionaire offers me money.

The similarity analysis has a clear solution here: the worlds most similar to the actual world where it rains obviously don’t include the ones where a billionaire happens to offer me money, and in the most similar worlds where it rains and the billionaire does offer me money, I do in fact go to the movies, so the two sentences can be true at the same time.
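The movie example can be run on a toy model with three worlds. Everything here is an assumption made for illustration: the `World` fields, the hand-picked `distance` values, and the simple version of the similarity analysis that requires all (rather than a large majority) of the closest antecedent worlds to be consequent worlds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class World:
    rain: bool
    offer: bool    # a billionaire offers me money to go to the movies
    movies: bool
    distance: int  # assumed distance from the actual world (lower = more similar)


WORLDS = [
    World(rain=False, offer=False, movies=True, distance=0),  # actual world
    World(rain=True, offer=False, movies=False, distance=1),
    World(rain=True, offer=True, movies=True, distance=5),
]


def strict(antecedent, consequent, worlds=WORLDS):
    """True iff every accessible antecedent world is a consequent world."""
    return all(consequent(w) for w in worlds if antecedent(w))


def similarity(antecedent, consequent, worlds=WORLDS):
    """True iff every closest antecedent world is a consequent world."""
    a_worlds = [w for w in worlds if antecedent(w)]
    if not a_worlds:
        return True  # vacuously true when the antecedent holds nowhere
    closest = min(w.distance for w in a_worlds)
    return all(consequent(w) for w in a_worlds if w.distance == closest)


def rain(w):
    return w.rain


def rain_and_offer(w):
    return w.rain and w.offer


def stays_home(w):
    return not w.movies


def goes(w):
    return w.movies


# Similarity analysis: both sentences come out true together.
print(similarity(rain, stays_home), similarity(rain_and_offer, goes))  # True True

# Strict conditional: the first sentence fails because of the offer world.
print(strict(rain, stays_home), strict(rain_and_offer, goes))  # False True
```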

This idea that adding more detail to a counterfactual can flip its truth value is worth considering when we decide on a semantic framework.

We might plausibly also want to be able to talk about how two people with power might interact, and if that’s the case, then we need the similarity analysis. We might want to be able to say things like “If P had had power and P’ had had power, together they would have done X.” It’s plausible to imagine two people only being evil together, egging each other on and acting as a collective. On the reading of “had power” as omnipotence, I don’t think we can go there. I very much don’t want to have to consider worlds where two people can both effect any metaphysically possible change, because such a thing seems frankly contradictory to me.

On the other hand, if we want to give up the precision of talking about omnipotence and talk instead about more vague forms of power, then we can plausibly change the model to license talk like this, about what P and P’ would have done together if they’d both had power. We can re-engineer the whole thing in the following way: define a set C(P&P’) in the obvious way, an analogous g: C(P&P’) → [0, 1], and then we can talk about the supremum sup(g(C(P&P’))) and define E(P, P’) as the function that takes the measure, Plato style, of the duo. For any arbitrary cohort (P1, …, Pn) of actors we can define an analogous model.

A shortcoming of this modified model is that it can’t apportion responsibility differently between the actors; rather, it assigns collective responsibility to the whole for each evil act. It also assumes collaboration, which is plausible for an appropriate reading of “power”. We’re not concerned with incidental happenings X that might occur if P and P’ held simultaneous power. We’re specifically talking about collective actions X between P and P’.

The obvious next project for the Plato enthusiast is to try to engineer some sort of story about the compositional semantics for combining CP and CP’ into C(P&P’). In other words, is there a way to get a true counterfactual about what the duo (trio, etc.) would have done with power collectively out of what the individual members would have done with power? As a general thing it’s probably impossible to do, but there might be some circumstances where we can get a sort of compositional semantics. I might speak more on that at some later point.

[1] A → B is true just in case, in the most similar possible worlds (or most of the most similar possible worlds, depending on your preference) to the actual world where A is true, B is true.

[2] A → B is true just in case, in all accessible possible worlds where A is true, B is true.